6 Testing best practices and ideas for your email marketing

Do you follow email testing best practices?

Even with the best email components and the most efficient campaign execution, some email marketing efforts fall flat. Testing emails can help you figure out which parts of your marketing campaign need improvement.

Before we go further, let's review the basics. We'll talk about the different types of email testing, how to perform split testing, and how to interpret email test results.

What are the different types of email testing?

There are two main types of email testing.

Some are tests you put emails through right after crafting them (but before sending them).

Other tests can’t happen without sending emails to at least some of your subscribers. The purpose of these types of email tests is to gather data to help your current emails perform better. They also allow you to create better emails in the future.

Quality assurance tests

At the very least, quality assurance testing for emails will help you avoid the worst outcomes before they have a chance to happen.

Here’s a list of email tests you can perform before executing marketing campaigns.

- Link validating: Test every single link included in your email. Without URLs pointing to the correct locations, your marketing campaign won’t be effective and won’t lead to conversions. Broken and incorrect links won’t do your brand’s reputation any good and can result in a bad experience for your subscribers.

- Spam testing: Your best efforts will go to waste – and land in numerous spam folders – if you skip this step. If email service providers mark your messages as spam, you may face repercussions. Down the line, these will hurt the deliverability of your emails.

- Design testing: This will check format and image rendering across different apps and devices. Some email marketing services (EMS) have automated tools for this functionality built right into your account. It's often bundled with an in-house spam testing feature.

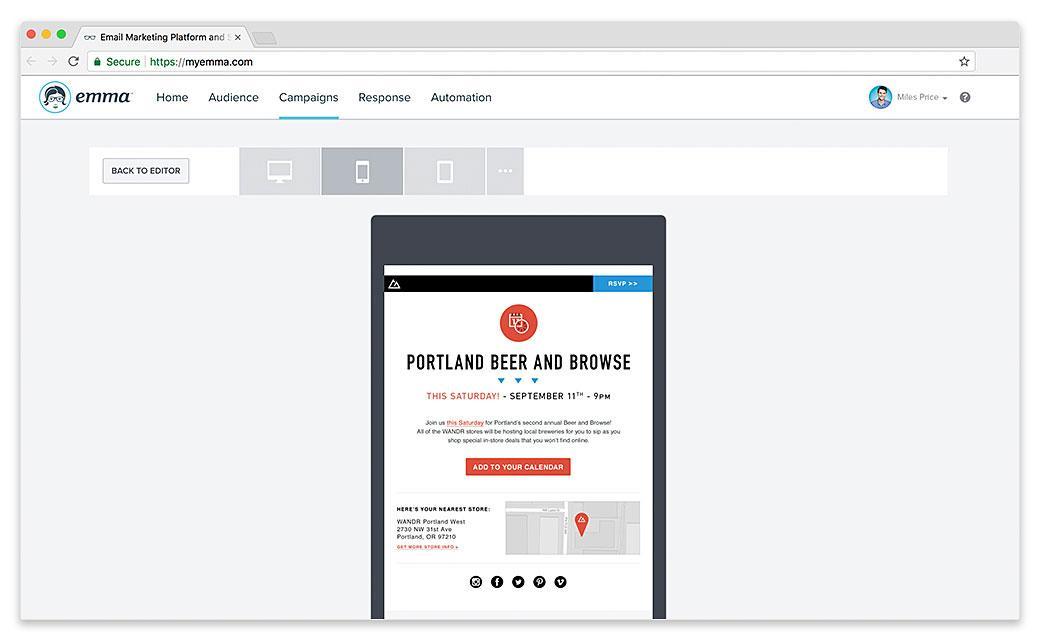

A very convenient way to conduct design testing is through looking at inbox previews provided by your EMS.

Source: Emma
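As a rough illustration of link validating, here is a minimal Python sketch that pulls every link out of an email's HTML and flags the ones that look broken. The function and class names are hypothetical, and the check is structural only: it catches empty or scheme-less links, but confirming that a page actually loads would still require requesting each URL.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class LinkCollector(HTMLParser):
    """Collects the href value of every <a> tag in an HTML email body."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            # attrs is a list of (name, value) pairs; a missing href becomes ""
            href = dict(attrs).get("href")
            self.links.append(href or "")


def find_suspect_links(html):
    """Return links that are empty or lack a usable http(s)/mailto scheme."""
    collector = LinkCollector()
    collector.feed(html)
    suspect = []
    for link in collector.links:
        parsed = urlparse(link)
        if parsed.scheme in ("http", "https"):
            # A web link needs a host name after the scheme
            if not parsed.netloc:
                suspect.append(link)
        elif parsed.scheme != "mailto":
            # Assumption: anything other than web and mailto links is worth a manual look
            suspect.append(link)
    return suspect


sample = (
    '<a href="https://example.com/sale">Shop</a>'
    '<a href="">Broken</a>'
    '<a href="example.com/about">Missing scheme</a>'
)
print(find_suspect_links(sample))
```

In this sample, the empty link and the link without `https://` are reported, while the complete URL passes.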

Variable tests

You can choose to perform only quality assurance checks or only variable testing, but this practice may not yield the best results. Instead, it’s much better to make space in your schedule for both. Going with your gut instead of conducting email tests will often result in more time-consuming trial and error.

There are two kinds of variable tests, and one is much more popular and widely used than the other. Let’s start with the less common one:

-

Multivariate testing: Marketers use this type of email testing to test several different variable combinations at once. The goal is to find the best possible set of variables to ensure the success of the email campaign.

-

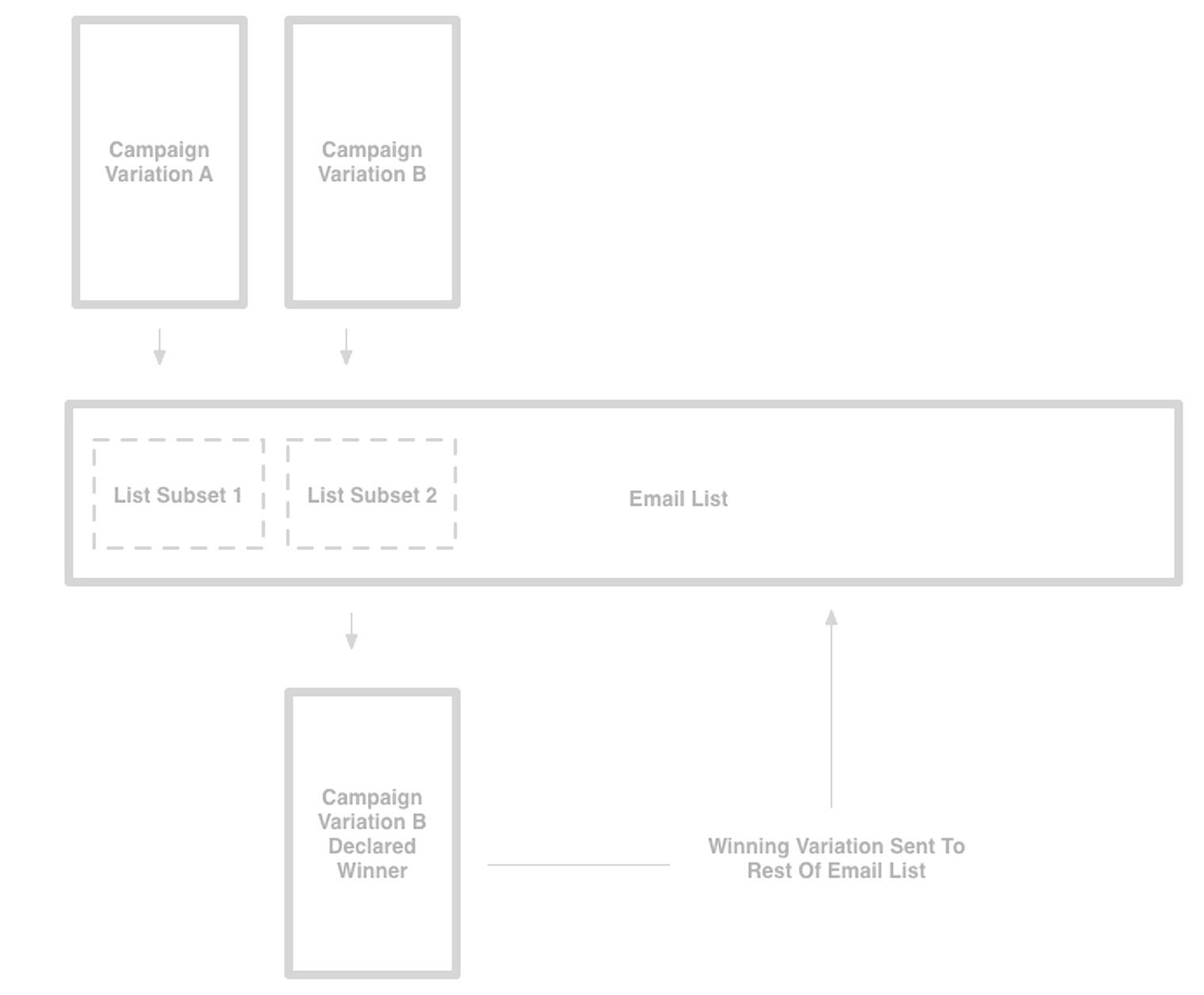

A/B testing: Also called split testing, the strategy can apply to almost all subdisciplines of marketing. For email, it works by sending one variation of your email to a smaller group within your email list. A second variation goes to another group. The reactions of these smaller groups to your emails determine which version is better. After this step, you may tweak your current campaign before sending the improved email to your entire list or larger intended segment.

Source: Campaign Monitor

In A/B testing, each variation changes only a single variable at a time. This is the main difference between it and its multivariate counterpart. Split testing is effective in finding out which version of a message will increase key performance indicators (KPIs).
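To make the mechanics of a split test concrete, here is a minimal Python sketch of how a list might be divided into two test groups and a remainder. Most EMS platforms handle this step for you; the function name, the 20% test fraction, and the sample addresses are all assumptions made for illustration.

```python
import random


def split_for_ab_test(subscribers, test_fraction=0.2, seed=None):
    """Randomly assign a fraction of the list to groups A and B.

    Returns (group_a, group_b, remainder). The remainder is the part of
    the list that receives the winning variation after the test.
    """
    rng = random.Random(seed)
    shuffled = list(subscribers)
    # Shuffle so that neither group is biased by sign-up order
    rng.shuffle(shuffled)

    test_size = int(len(shuffled) * test_fraction)
    half = test_size // 2
    group_a = shuffled[:half]
    group_b = shuffled[half:2 * half]
    remainder = shuffled[2 * half:]
    return group_a, group_b, remainder


# Hypothetical list of 1,000 subscribers
subscribers = [f"user{i}@example.com" for i in range(1000)]
group_a, group_b, remainder = split_for_ab_test(subscribers, test_fraction=0.2, seed=42)
print(len(group_a), len(group_b), len(remainder))
```

Random assignment matters here: if group A were simply the oldest subscribers and group B the newest, any difference in results could come from the groups rather than from the variation being tested.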

Which email components can you change in email split testing?

As long as an element is part of the email marketing campaign you’re set to execute, you can make it an A/B testing variable. However, remember that split testing allows for only one element change at a time.

Interested in gathering data from more than one changed element? You can always run a second A/B test after you determine the best variation from the first test.

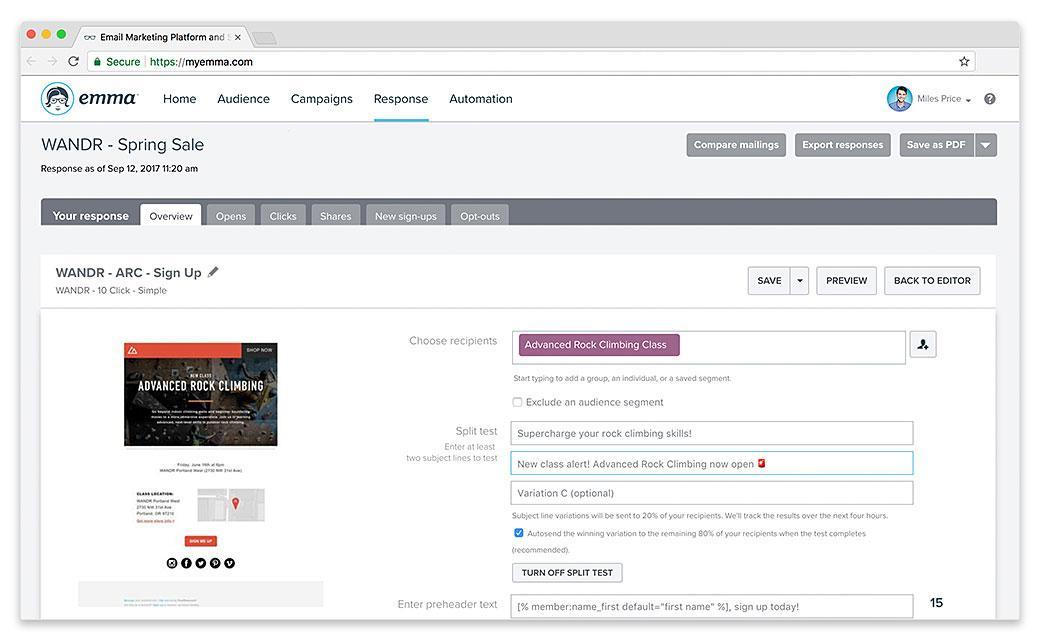

Source: Emma

Below are some common email elements that can benefit from split testing:

- Subject line: As you can see above, Emma’s A/B testing tool makes it possible to try two different titles on a relatively small subset of your email list. Start split testing with this variable if you want to raise your open rates. Consider that email titles using emojis may experience 56% higher open rates and personalized ones are 26% more likely to get opened.

- Preheader: This is often pulled from the initial line of your email body. If you have the know-how and the capacity to change this part, do so – especially if your email list is mostly Generation Z (Gen Z). Nearly every Gen Z-er owns a smartphone, which means they’ll see whether your preheader is intentional or not.

- Email copy: When testing this component, you may try changing design and layout elements like font or placement. You can also make one variation more concise, personalized, or urgent-sounding.

- Images: More than half of email users check their inbox on their mobile devices, so split test your file size and optimization if your email is image-heavy. It’s also good to remember: Only 30% of an email’s space should go to visuals.

- Call-to-action (CTA): A better CTA means better click-through rates and more potential to increase conversions. Try working with different CTA button colors or different text lines.

- Send time: There’s no universal best time to send campaign emails, so this variable is a commonly tested one. Much of this depends on how well you know your subscribers and their habits.

How do you translate A/B testing results into actionable input?

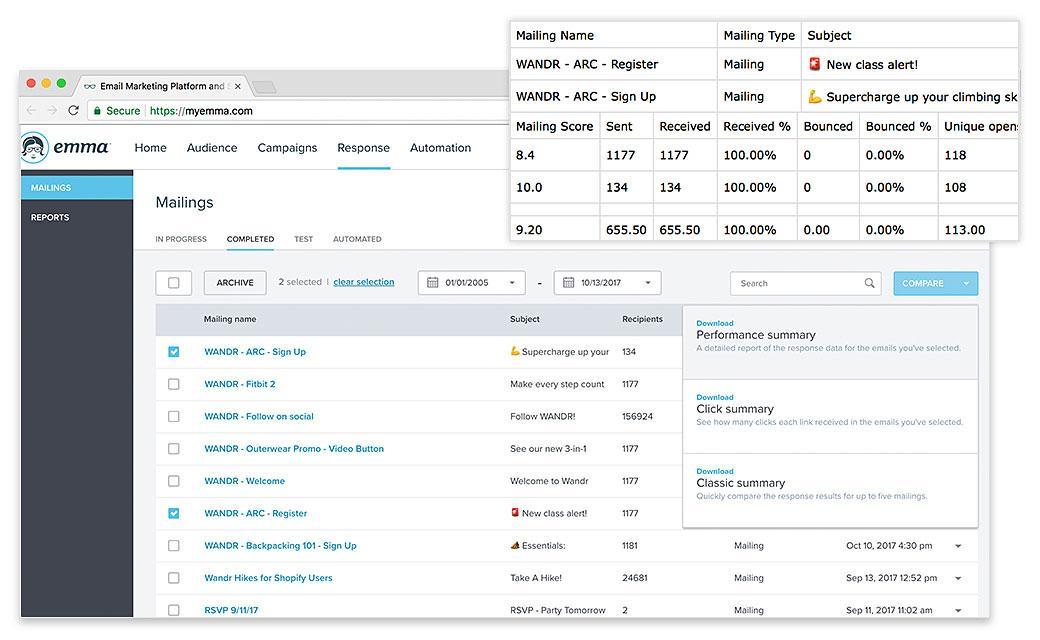

If you work with an EMS, chances are the platform will have a way to show important data in an organized fashion. For example: Emma collects the results of multiple mailing instances in one spreadsheet, from which you can generate summaries or check detailed reports. You can see this in action below.

Source: Emma

You need to know your current metrics before conducting split tests. Take note of open rates, click-through rates, and any other stat you want to boost.

These will be the basis of your A/B testing hypothesis. Focus on one or two metrics that you want to improve, and then pick an email component to change. The latter decision should rest on careful observation and sound speculation.

Here’s an example: Your open rate has been declining over the last four email campaigns you’ve executed. You think it’s because your subject line is formulaic and safe. During split testing, you try a new approach to crafting email titles. If your open rate improves, it means your hypothesis was likely correct.

What comes next after a proven hypothesis? Applying the new approach to the mass sending of the email campaign and in crafting future campaigns, of course.
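The arithmetic behind that comparison is simple enough to sketch in a few lines of Python. The counts below are made up for illustration, and the helper names are hypothetical; your EMS reports will usually give you the rates directly.

```python
def rate(events, delivered):
    """Share of delivered emails that produced the event (an open, a click)."""
    return events / delivered if delivered else 0.0


def relative_lift(baseline_rate, variant_rate):
    """Relative change of the variant over the baseline, e.g. 0.10 means +10%."""
    if baseline_rate == 0:
        raise ValueError("baseline rate must be greater than zero")
    return (variant_rate - baseline_rate) / baseline_rate


# Hypothetical counts: the control keeps the usual subject line,
# the variant tries the new approach.
control_open_rate = rate(events=180, delivered=1000)
variant_open_rate = rate(events=220, delivered=1000)
lift = relative_lift(control_open_rate, variant_open_rate)

print(f"Control open rate: {control_open_rate:.1%}")
print(f"Variant open rate: {variant_open_rate:.1%}")
print(f"Relative lift: {lift:+.1%}")
```

A positive lift supports the hypothesis, but on its own it does not say whether the difference is larger than what random chance would produce.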

What are some email testing best practices to keep in mind?

Here are six email split testing best practices to keep your process more focused, efficient, and productive.

1. Have an end goal

Don’t just form a hypothesis. Identify your end goal, too.

To continue the sample situation earlier: If your typical open rate (before it started to decline) was 25%, do you aim for that or a little higher? Name the number and stick to it.

Split testing allows you to learn more about email marketing and your subscribers during the process. Its purpose is not solely to increase stats.

2. Conduct simultaneous tests

Run both variations of a split test at the same time. If you do another round of split testing to improve on your previous “winner,” run that test under similar conditions, too. Otherwise, differences in timing or conditions can skew the results and make them wildly inaccurate.

3. Keep a control version

A/B testing works best if one of the variations is your original email campaign. This is your real and reliable baseline. If you want to test more than two variations, conduct separate A/B tests for each. This will make confounding variables easier to spot.

4. Act on statistically significant results

Perform email testing on as large a subset of your email list as you can. This will lessen the probability of random chance or error and increase the accuracy of your results.

You may also consider letting your test run longer. Some of your subscribers may not open their emails the moment they hit the inbox.
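One common way to check whether a difference in open rates is statistically significant is a two-proportion z-test. The sketch below is one possible approach, not necessarily what your EMS uses, and the counts are hypothetical. It relies only on Python's standard library.

```python
import math


def two_proportion_z_test(opens_a, sent_a, opens_b, sent_b):
    """Return (z, two-sided p-value) for the difference in open rates."""
    p_a = opens_a / sent_a
    p_b = opens_b / sent_b
    # Pooled rate under the assumption that both variations perform the same
    pooled = (opens_a + opens_b) / (sent_a + sent_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / sent_a + 1 / sent_b))
    if se == 0:
        return 0.0, 1.0
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Same open rates (18% vs. 22%), two different test sizes
z_small, p_small = two_proportion_z_test(18, 100, 22, 100)
z_large, p_large = two_proportion_z_test(180, 1000, 220, 1000)

print(f"100 per group:  z = {z_small:.2f}, p = {p_small:.3f}")
print(f"1000 per group: z = {z_large:.2f}, p = {p_large:.3f}")
```

With 100 recipients per group, the p-value is roughly 0.48, so the gap could easily be chance. With 1,000 per group, the same rates give a p-value of roughly 0.025, which falls below the commonly used 0.05 threshold. This is why a larger test group makes your results more trustworthy.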

5. Test continuously and test often

This doesn’t cancel out having an end goal. When you reach your goal, implement the change proven effective by testing. However, think of new hypotheses when you have a moment to spare. Running A/B tests in the background all the time with different variables changed can lead to highly optimized email campaigns months down the line.

6. Trust the data

Don’t ignore the results when they come in, and don’t choose your gut over empirical data. Act on your findings promptly: if you wait until your email list evolves and your subscribers’ behavior and demographics change completely, the results may no longer apply.

Wrap up

There are two main types of email testing you can perform: quality assurance checks and variable tests. A/B or split testing is a very helpful example of the latter.

With A/B testing, you can test a variation of one element of an email against another – preferably your original work. The test exists to prove or dispel a hypothesis about the performance of your email campaign.

Below are six email testing best practices:

- Have an end goal

- Conduct simultaneous tests

- Keep a control version

- Act on statistically significant results

- Test continuously and test often

- Trust the data

Have you ever sent an email that you wish you could redo? Don’t find yourself in that situation again – use our preflight checklist for email campaigns.
