The Ultimate Step-By-Step Guide To

Improving Your Email Results

with Jay Baer

Convince & Convert

My friend Tom Webster from Edison Research has a fantastic saying: “the plural of ‘anecdote’ is not ‘data.’” But so many email programs today are governed by what we believe to be true based on stories we’ve been told, or stories we choose to tell ourselves. Even now, in 2018, with a cavalcade of available data the size of Jay-Z’s ego, we still aren’t often managing email in a fully data-driven way.

It’s said that “if you cannot measure, you cannot improve.” That’s not entirely right. I would say it this way: “if you don’t measure, any improvements you make are probably a happy accident.” The success of any email marketing program should rely heavily on your ability to carefully gauge both your successes and your mediocrities. By monitoring the most important aspects of your email programs and individual sends, you can make well-informed decisions about the elements that require alterations and adjustments. Measurement always precedes improvement; otherwise you’re just shooting random bullets in the air, hoping a bird flies by simultaneously. It might work, but not reliably.

You might think “if it ain’t broke, don’t fix it.” Maybe your email is pretty darn good already? If so: high five. But a common fallacy is that successful email programs are self-sustaining. Email is not a “set it and forget it” system, even when it’s working. Like Mariah Carey, your email marketing demands regular maintenance. Consistent attention and a constant desire to create a more meaningful connection between brand and customer are prerequisites to a profitable email program.

In this Ebook, you will learn how to:

A. Identify the key metrics for your program

B. Accurately diagnose program weaknesses

C. Plan and enact changes to improve your email marketing performance

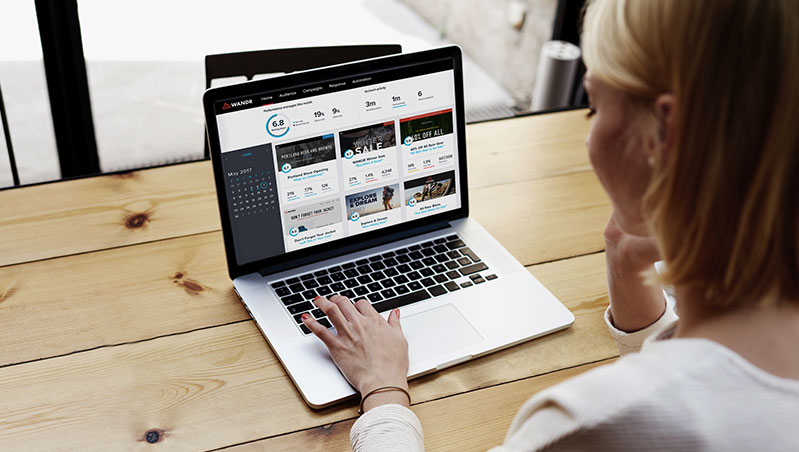

D. Adopt a never-ending approach to enhancing email metrics using Emma

Step 1

Establish a Solid Foundation for Email Metrics

Failure to plan is a plan to fail. If possible, identify your key metrics before launching your email campaign. If your email program is already in progress, and it probably is, it’s never too late to make sure you’re using the best possible success scoreboard.

The challenge with identifying key email metrics is that one size does not necessarily fit all. The metrics you rely upon will be as unique to your program as your design, message, and call to action. There is no MAGIC email success number. Whatever metrics you choose to embrace, the best scenario is that they match up as much as possible with your actual business objectives. Because, let’s be honest, the goal isn’t to be good at email; the goal is to be good at business because of email, right? Here are some sample scenarios to explain what I mean.

Rachel is a content marketer for a software company, so she and her team create and publish content to help inform her audience. Rachel uses email to promote new articles, videos, and graphics as they are developed.

Recommended Key Metric:

Clicks to content (total clicks and percentage of recipients who click)

Explanation:

Since Rachel’s objective for her email program is to distribute content marketing assets to her customers and prospects, she can gauge the success of her program by monitoring the number of clicks to content promoted within each campaign, as well as click rate. While other metrics related to opens and unsubscribe activity are important, click activity and email content engagement are the primary success indicators for Rachel’s program. You go, Rachel!

Tyler works for an ecommerce company that sells smartphone cases. Tyler secretly hates Apple for changing the size of their phones about every twenty minutes. As it relates to email, however, Tyler uses it to encourage new and returning customers to purchase phone cases online, instead of at the crappy mall kiosk. Tyler sends email to his subscribers when new cases become available and provides special offers and discounts.

Recommended Key Metrics:

Clicks to product links and sales from email.

Explanation:

Since Tyler’s goal is to encourage purchase activity among his subscribers, his key metrics include clicks on individual product links, as well as total sales generated from his campaigns. In this scenario, Tyler can rely upon Emma for his click metrics, view purchase activity from email through an integration with his ecommerce platform (like Shopify or Magento), and track post-click activity on his ecommerce website. Tyler is all about the cash.

Sylvia is a real estate agent who informs her clients and prospects about available property listings on the market. She sends emails regularly that feature photos, specs, and pricing details for homes in her area.

Recommended Key Metrics:

Open and click activity from key prospects.

Explanation:

Overall open and click rates are useful to Sylvia as she evaluates the response to her campaigns, but to accomplish her business goals she must determine which key prospects indicate interest based on their open and click behavior. Armed with knowledge about who opened and what home listing they clicked, Sylvia can make smart decisions about how she follows up with contacts. She is using email interaction behavior as an indicator of interest. Sylvia is smart like that. If she sends out a profile of a six-bedroom home, the people that click through to look at the photos probably need some space. Conversely, people with five kids are unlikely to look at an available high-rise, one-bedroom listing.

Andres is the curator of a local museum. He uses Emma to help him keep museum members and previous visitors informed of upcoming exhibits. Andres uses a newsletter format for all email campaigns, which he sends at monthly intervals.

Recommended Key Metrics:

Open rates and click rates over several months.

Explanation:

Given the nature of Andres’ newsletter style, it will be crucial to monitor the interest level of his subscribers by reviewing open rate, click rate and unsubscribe activity habitually. Email campaigns sent according to a regularly scheduled cadence must be measured over the long-term to ensure recipients are still motivated to open and engage with each delivery. After all, Andres needs to keep the museum top of mind, and entice return visits when exhibitions change. But he has to make sure his subscribers open and read his emails when it’s time for a new exhibit.

Your Best Possible Benchmark: You

As you begin to define what success looks like for your email program, you may consider reviewing studies and data that show email marketing benchmarks for your industry. Benchmark reports can provide a good starting point to gauge your performance against others in your niche field. But a word of caution: While these studies can be helpful, they also lack context for your unique email program and marketing objectives. The best benchmark for any upcoming campaign is a previously sent campaign. Your ultimate standard for success is whatever result you achieved with your most recent email send. And really, if all you care about are averages, you’ll never be anything other than an average marketer.

Mistaking Weather for Climate

As noted in Andres’ museum newsletter scenario above, many email programs should be judged as a whole and not as individual pieces. I have a sign in my office that reads: “Remember: some days you’re the pigeon, and some days you’re the statue.” This is good advice for email senders. You’re not as good as your most successful campaign, and you’re better than your least successful campaign.

Don’t allow one sucky send to drastically alter your long-term strategy. Stay the course, and gauge the success of your program over time. Make adjustments to your email strategy based on several campaigns, not one-off occurrences. On at least an annual basis, consider conducting a full review of your email program strategy with the team at Emma.
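If you want to see the climate instead of the weather, a rolling average is one simple way to do it. The snippet below is a minimal sketch, not an Emma feature: the `rolling_average` helper, the three-campaign window, and the sample open rates are all made up for illustration.

```python
from statistics import mean


def rolling_average(values, window=3):
    """Average each value with the ones before it, using up to `window` campaigns."""
    averages = []
    for i in range(len(values)):
        start = max(0, i - window + 1)
        averages.append(mean(values[start:i + 1]))
    return averages


# Hypothetical open rates (percent) for six consecutive campaigns.
# The third send is the "sucky" one.
open_rates = [22.0, 24.0, 15.0, 23.0, 25.0, 24.0]

for raw, smoothed in zip(open_rates, rolling_average(open_rates)):
    print(f"raw: {raw:5.1f}   3-campaign average: {smoothed:5.1f}")
```

The single bad send still shows up in the smoothed numbers, but it no longer looks like a collapse, which is the point of judging several campaigns together.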

Step 2

Watch & Learn

After setting a foundation for email measurement by selecting key metrics derived from program goals and objectives, it’s time to start monitoring performance. Whether you are just starting out or applying a newly defined measurement process to an established email program, give your email marketing the necessary time to form a trend. Rash judgments formed with limited data can lead to poor results.

Email and The Scientific Method

Whether you realize it or not, you have likely been using the Scientific Method since the fifth grade, and there is no better way to make your email better. When applied to your email program, the Scientific Method may look a little something like this:

Ask A Question: How can I improve my key email marketing metric(s)?

Gather Data: Review metrics from previously sent campaigns, if available. Make observations from campaigns sent most recently. Identify trends specific to your key metric(s). Document high and low levels for key metric(s).

Form a Hypothesis: Develop a general theory about how slight modifications to your messages, design, cadence, or program will lead to improved results. Also, speculate about what you can expect if no changes are made.

Do an Experiment: Choose one element to alter according to your hypothesis and test it against your audience. More on this later.

Analyze Your Results: Review mailing response metrics and test results. Determine if your hypothesis was true or false.

Report Results: Collect and document test experiment results to inform future campaigns and hypotheses.

While the Scientific Method offers us a simple recipe that is easy to follow, it also requires quality ingredients. Poor or limited data can lead to shoddy decision-making and lackluster results. We all want to generate big results as fast as possible, but give your program the necessary time and space (e.g. three to four campaigns with minimal alterations) to form a recognizable trend that demands attention.

When using this Scientific Method, make sure you write down your hypotheses (the “Form a Hypothesis” step above). This helps you keep a specific focus on what you think will work. For example: “I believe that sending this email at 4pm will improve open rates by 20% in comparison to my current send time of 11am.”
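A written hypothesis like the one above is easy to check with a little arithmetic. Here is a rough sketch that reads “improve open rates by 20%” as a relative lift (so a 20% open rate would need to reach 24%), which is an assumption on my part; if you mean 20 percentage points, the math changes. The function names and sample rates are hypothetical.

```python
def relative_lift(baseline_rate, test_rate):
    """Relative change of test_rate versus baseline_rate, as a fraction."""
    if baseline_rate <= 0:
        raise ValueError("baseline_rate must be positive")
    return (test_rate - baseline_rate) / baseline_rate


def hypothesis_supported(baseline_rate, test_rate, target_lift):
    """True when the observed relative lift meets or beats the target."""
    return relative_lift(baseline_rate, test_rate) >= target_lift


# Hypothetical results: 11am send (baseline) vs. 4pm send (test).
open_rate_11am = 0.20
open_rate_4pm = 0.25

lift = relative_lift(open_rate_11am, open_rate_4pm)
print(f"Observed lift: {lift:.0%}")
print("Hypothesis supported:", hypothesis_supported(open_rate_11am, open_rate_4pm, 0.20))
```

Meeting the target once does not prove the hypothesis. It only tells you the result is worth repeating.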

In addition to his line about anecdotes not equaling data, my friend Tom – he’s a professional market researcher and analyst – says: “Good marketers use data to prove themselves right. Great marketers use data to prove themselves wrong.” I want this on a poster.

Step 3

Email Analytics Assessment

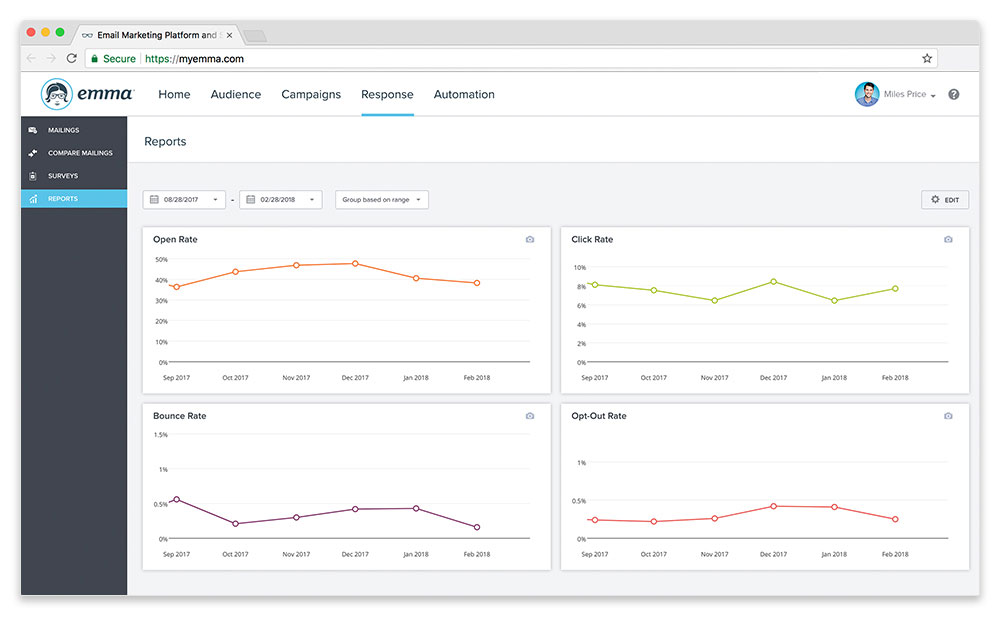

Your business objectives should help determine your key metrics. In a similar fashion, the type of email program you’re running should dictate what improvements you make. For example, a newsletter program that seeks to hold the subscribers’ attention and keep them informed over time should try to grow email open rates and click rates.

But if you’re running an automated campaign to encourage action from a subscriber in a progressive, step-by-step manner across multiple emails, you should focus on finding weak links in that nurture sequence, the same way we play “which is the worst Kardashian?”

Participation declines from message to message are much more substantial and deserving of attention in a short-term automated program than in a long-term newsletter program.
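Finding the weak link in a nurture sequence mostly means looking for the biggest drop between one email and the next. The sketch below assumes you have an open rate for each email in the sequence; the `biggest_drop` helper and the numbers are hypothetical.

```python
def biggest_drop(open_rates):
    """Return (email_number, drop) for the largest decline between consecutive emails.

    email_number is 1-based and points at the email where the decline lands.
    """
    if len(open_rates) < 2:
        raise ValueError("need at least two emails to compare")
    drops = [
        (i + 1, open_rates[i - 1] - open_rates[i])
        for i in range(1, len(open_rates))
    ]
    return max(drops, key=lambda pair: pair[1])


# Hypothetical open rates (percent) for a four-email automated sequence.
sequence_open_rates = [48, 41, 25, 23]

email_number, drop = biggest_drop(sequence_open_rates)
print(f"Weakest link: email {email_number} (down {drop} points from the previous email)")
```

In this made-up sequence the third email loses the most readers, so that is the message to rework first.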

Once you spot a legit trend, you can start to make alterations. The hard part is figuring out exactly what metrics matter, and what to do if you see a problem or decline.

Let’s start by describing the most common success metrics.

Key Metrics Defined

Delivery Rate: Successful deliveries as a percentage of list size.

Open Rate: Number of subscribers who open as a percentage of emails delivered.

Click Rate: Number of clicks within an email as a percentage of opens.

Unsubscribe Rate: Number of recipients who unsubscribe as a percentage of emails delivered.

SPAM Complaints: Total number of recipients who mark the email as “SPAM” or junk for each email send.

Active Ratio: Number of email recipients who consistently open and interact with emails as a percentage of list size.

Post-Click Activity: The volume of leads generated, products sold, or other brand-specific objectives completed as a result of email marketing to a targeted audience. Note: Metrics for post-click activity are usually available within a website analytics (e.g. Google Analytics) or ecommerce analytics (e.g. Shopify) platform.
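The rate definitions above are just counts divided by other counts. Here is a minimal sketch, assuming you can export raw counts for a single send. The `SendStats` fields and the sample numbers are hypothetical and do not reflect Emma’s actual export format. Click rate follows this guide’s definition (clicks as a percentage of opens).

```python
from dataclasses import dataclass


@dataclass
class SendStats:
    """Raw counts for one email send (hypothetical field names)."""
    list_size: int
    delivered: int
    opens: int          # subscribers who opened
    clicks: int
    unsubscribes: int


def pct(part, whole):
    """Percentage of `part` in `whole`, or 0.0 when `whole` is zero."""
    return 100.0 * part / whole if whole else 0.0


def key_metrics(stats):
    """Compute the rate metrics as defined in this guide."""
    return {
        "delivery_rate": pct(stats.delivered, stats.list_size),
        "open_rate": pct(stats.opens, stats.delivered),
        "click_rate": pct(stats.clicks, stats.opens),
        "unsubscribe_rate": pct(stats.unsubscribes, stats.delivered),
    }


# Made-up numbers for a single campaign.
campaign = SendStats(list_size=10000, delivered=9800, opens=2450, clicks=490, unsubscribes=49)

for name, value in key_metrics(campaign).items():
    print(f"{name}: {value:.1f}%")
```

Whichever denominators you choose, use the same ones for every campaign so that your comparisons against previous sends stay apples to apples.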

Medicine for your Metrics

Once you’ve got a handle on your email weaknesses, you can choose the appropriate medicine. The table below is designed to help you select one or more solutions to your problems, like CVS or Walgreens, but for email.

Medicine Instructions for Use

List Validation This step is recommended when the integrity of the list is in question. List validation allows email marketers to verify that all email contacts are legitimate. Third-party services/tools like BriteVerify, DataValidation, or XVerify can provide validation to improve delivery rate and overall list hygiene.

Subscribe Process Review When bounce rate, unsubscribe rate, or SPAM complaints escalate, it is best to hit the “pause” button and retrace the steps taken to sign up new email recipients. List quality is damaged when your email arrives like a surprise, uninvited party guest. This is like when Apple (them again) decided to just add an entire U2 album to everyone’s iTunes without asking. Conduct a thorough review of your subscription sources to confirm all subscribers have the expectation that they will receive messages from your brand.

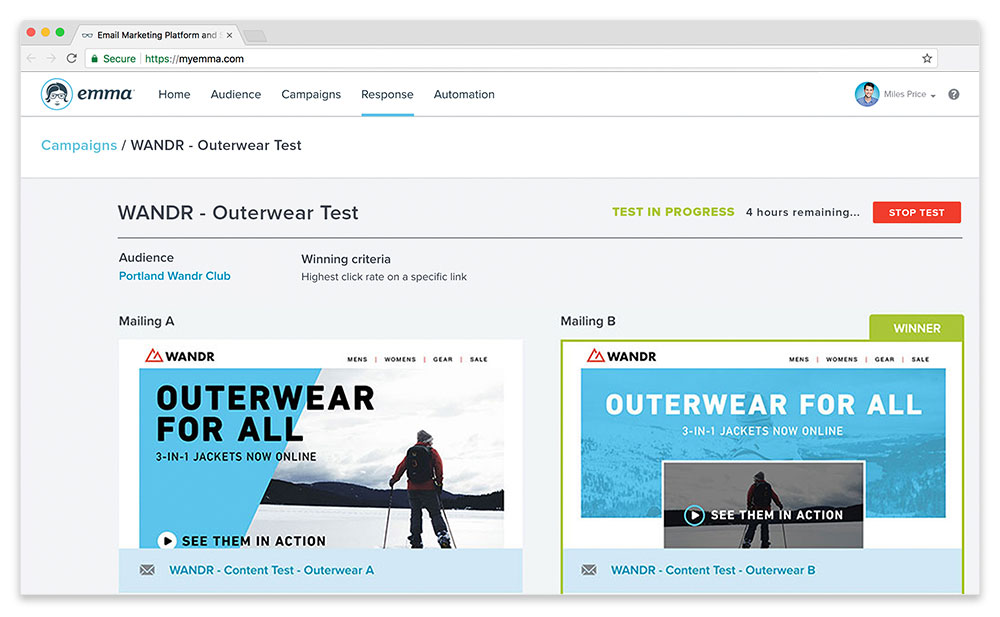

Subject Line & From Name Testing With Emma, you can conduct Subject Line or From Name testing to improve your open rates. Test only one element at a time (either Subject line or From Name) to configure a true A/B split test. More on testing later.

List Segmentation If your message is not hitting the mark for all of the subscribers on your list, consider segmenting the message into meaningful groups. Relevancy is the killer app. Delivery of a more unique and specific message to smaller groups of subscribers can boost relevance improve results. The dividing lines upon which you segment the audience are only limited by the information you are able to collect from them. For instance, you can segment by location, product interest, demographics, content preference, previous interactions with your email messages (e.g. recipients who clicked a link in an email vs. recipients who did not), etc.

Content Alignment Did you hear about the woman that tried to bring an emotional support PEACOCK on a plane? It doesn’t make any sense, right? That kind of incongruence can occur in your email program, as well. Do you have promising open rates, but dwindling click behavior, and/or do your stats show good click volume, but subscribers bail out once they hit your website? If so, your email program could be suffering from content dissonance. Review your entire send process from your subscriber’s perspective and make sure there is a natural harmony from subject line to email content and everything that follows. Eliminate or edit anything that feels like it’s out of place throughout the journey from inbox to email to landing page.

Call to Action Even your most loyal and passionate subscribers have limited time and attention to interact with your message. You know what nobody says? “I sure wish I could get more email.” Keep your message simple and its purpose clear. Add a call to action or modify your existing ones so that the recipient’s next step is super obvious.

Content Testing If email subscribers open your messages often, but clicks are hard to come by, consider content testing. With Emma, you can do an A/B test to determine the true impact of modifying a single content variable. Choose your content testing elements wisely. Maybe you could use a better photo? Maybe the style or placement of the links could be improved? These elements can have the most significant impact on click rate and click volume.

Layout/Design Alterations Another option for improving email engagement is to alter the overall look and feel of your email layout. Subtle modifications to design can influence email metrics significantly.

Re-engagement When a portion of your subscribers tune you out repeatedly, a re-engagement campaign may be the best solution. These campaigns seek to answer one simple question, “Would you still like to receive emails from our brand?” � A well-executed re-engagement campaign can often result in A) renewed interest or B) a natural list cleansing to remove previous subscribers that are no longer fully engaged. Re-engagement campaigns rely on list segmentation (see above) to identify subscribers who have neither opened nor clicked any of your most recent campaigns. Note that the benefit of a re-engagement campaign is to remove uninterested subscribers before they mark your message as “SPAM.”
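To make the re-engagement segment concrete, here is a small sketch of the underlying logic: start with everyone on the list and remove anyone who opened or clicked in your most recent campaigns. This is not how Emma builds segments. The `reengagement_segment` helper, the three-campaign lookback, and the addresses are assumptions for illustration.

```python
def reengagement_segment(subscribers, engaged_by_campaign, recent=3):
    """Subscribers who neither opened nor clicked in the last `recent` campaigns.

    engaged_by_campaign is ordered oldest to newest; each entry is the set of
    subscribers who opened or clicked that campaign.
    """
    recently_engaged = set()
    for engaged in engaged_by_campaign[-recent:]:
        recently_engaged |= set(engaged)
    return sorted(set(subscribers) - recently_engaged)


# Hypothetical list and engagement history (oldest campaign first).
subscribers = ["ana@example.com", "ben@example.com", "cam@example.com"]
engaged_by_campaign = [
    {"cam@example.com"},   # cam engaged long ago, outside the lookback window
    {"ana@example.com"},
    set(),
    {"ana@example.com"},
]

print(reengagement_segment(subscribers, engaged_by_campaign))
```

How far back “most recent” should reach is a judgment call. A monthly newsletter probably needs a longer lookback than a weekly promotion.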

Step 4

Email Stimulus & Mailing Response

Marketers have a wide range of theories and opinions about which email elements have the biggest impact on results. However, the only opinion that truly matters is that of the email recipient. Subscriber behavior provides the best counsel for how a message should be modified.

Using Emma’s A/B split testing feature, you can learn what resonates most with your audience by trying new Subject Lines, From Names and content elements (e.g. headline, primary image, supporting text, call to action) within the email message. Your approach to testing should be iterative, with each test informing the next experiment. Small gusts can eventually lead to heavy winds of change.

A Note on Statistical Validity

Size of list doesn’t matter much, but it does inform how you test. If you have hundreds of subscribers instead of thousands, your testing process may suffer from a small sample size and a lack of statistical significance. If you ask three people if they thought “Dude, Where’s My Car?” was a great movie, and one undiscerning person says “yes,” the data show a 33% recommendation rate. However, when you ask 100 people about the same movie, you might get just three fans overall, making the rate just 3%. The smaller the tested audience, the bigger the swings you’ll see in results. So, your population size (i.e. number of subscribers or recipients) has an impact on the reliability of each test and whether you can expect a test result to repeat itself as a true indicator of reality. No matter, Emma believes that the testing process reveals helpful insights regardless of the sample size. Just know that larger lists and a repetitive testing process may be required to arrive at a truly valid result.
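If you want a rough number for how much to trust an A/B result, one common approach is a two-proportion z-test. The sketch below is an illustration using only Python’s standard library, not a description of how Emma scores tests. The function name and sample counts are hypothetical, and the normal approximation it relies on gets shaky with very small samples.

```python
import math


def two_proportion_p_value(successes_a, n_a, successes_b, n_b):
    """Two-sided p-value for the difference between two rates (z-test, pooled)."""
    p_a = successes_a / n_a
    p_b = successes_b / n_b
    pooled = (successes_a + successes_b) / (n_a + n_b)
    standard_error = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if standard_error == 0:
        return 1.0  # both groups are all-success or all-failure: no evidence of a difference
    z = (p_a - p_b) / standard_error
    # Two-sided tail probability of the standard normal distribution.
    return math.erfc(abs(z) / math.sqrt(2))


# Same open rates (20% vs. 25%), two very different list sizes.
small_list = two_proportion_p_value(20, 100, 25, 100)
large_list = two_proportion_p_value(2000, 10000, 2500, 10000)

print(f"100 recipients per version:    p = {small_list:.3f}")
print(f"10,000 recipients per version: p = {large_list:.3g}")
```

A smaller p-value means the gap is less likely to be a fluke. The same five-point difference that is unconvincing on a small list becomes very hard to dismiss on a large one, which is why small lists usually need repeated tests.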

Kaizen and Continuous Improvement

The Japanese word kaizen roughly translates to “change for better.” Organizations across the globe have adopted the kaizen philosophy of continuous improvement and applied it most commonly in the areas of manufacturing and mechanics. But kaizen can be applied to email marketing. The approach utilizes a Plan-Do-Check-Act cycle that repeats over and over again, leading to the best results possible over time. The process might look like this:

Plan: Determine what impact altering From Name has on open rate.

Do: A/B split test use of the brand name vs. the spokesperson’s name in the From Name field.

Check: Review results of the test to find that the spokesperson’s name produces a higher open rate.

Act: Apply the From Name change ongoing.

Plan: Determine if the same From Name alteration has the same impact with other lists and/or audience segments.

…and so on.

Improve Upon Previous Improvements

The improvement process knows no end. You can always find ways to improve your email results. The cycle should continue to solve for one issue before moving on to the next (e.g. figure out your low open rates before attempting to improve a lackluster click rate).

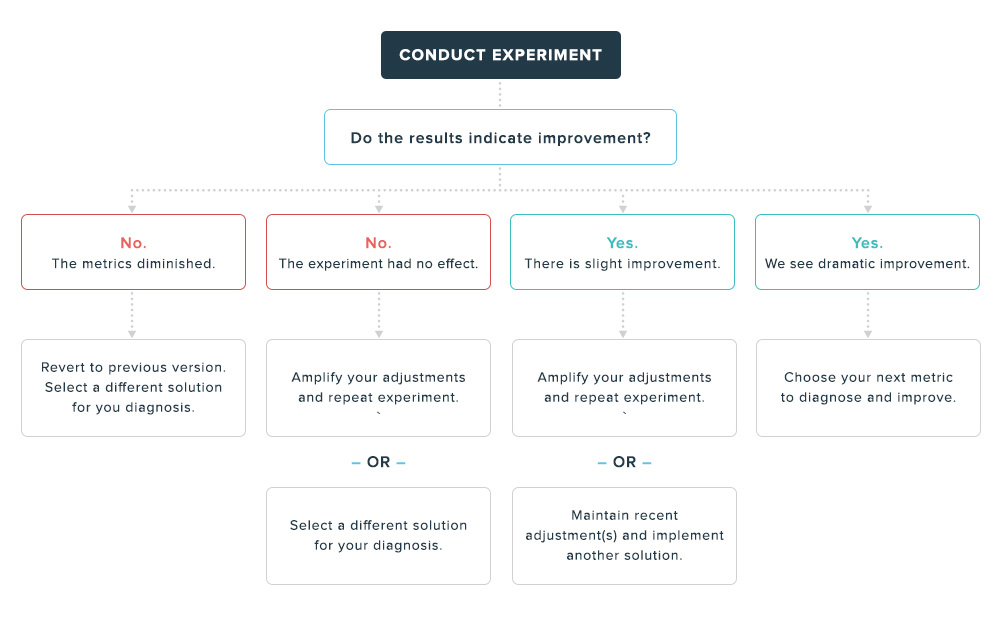

The decision tree that follows will help you determine whether to remain in the same Plan-Do-Check-Act cycle or to progress on to finding a remedy for a separate program weakness. If you need assistance at any time with planning your next move for your measurement and testing process, please reach out to the Emma services team.

Step 5

Conclusion

Just like any other measurement process, the number and variety of mailing response metrics is never in short supply. Your challenge is to determine the metrics that are most meaningful for your program. Place special importance on the metrics that reflect the business objectives (i.e. customer retention, increased sales revenue, improved lead conversion rates, etc.) of your email marketing operations. A solid foundation for your measurement process should be established as soon as possible.

The typical email marketing job description likely involves skills related to list configuration, design, content development, and delivery. But measurement is also a crucial component to any email marketing operation and relentless progress monitoring should be considered a good habit as opposed to a best practice. Your program’s mailing response metrics will always be your guide. Subscriber behavior helps us determine the changes required and the alterations that can be made with purpose.

With most email measurement processes, it’s important to take the long view. Show patience and allow time for real trends to take shape and inform your approach to continuous improvement. Plan to make alterations at a campaign level in an iterative fashion, but revisit macro adjustments for your entire program at regular (monthly, quarterly, or annual) intervals. To make massive advancements with your email marketing, you must adopt a culture of measurement and experimentation. And remember, never stop improving. Good enough is not enough.
